November 2, 2021

Demonstration of communication control technology that realizes natural remote control utilizing avatar robot "OriHime-D"

Expanding employment and activity opportunities for people who have physical disabilities or difficulty going out

NTT Corporation (NTT) announced today that it has achieved low-latency remote operation, without control time lag, of the avatar robot OriHime-D *2, which is used at the "Avatar Robot Cafe DAWN ver.β *1" permanent experimental store opened by OryLab Inc. The result relies on NTT's communication control technology, which dynamically controls the transmission timing of video streams to suit the situation and characteristics of each avatar robot. This enables optical transmission of multiple video streams without the streams waiting for each other, while efficiently using the network resources of IOWN. In the demonstration experiment, conducted with the cooperation of NTT Claruty Corp. (NTT Claruty), a person with a disability acted as a pilot, operating a robot as service staff at the Avatar Robot Cafe. The experiment confirmed that stress-free operation and smooth movement through narrow passages, both of which had been difficult in the past, were possible. The results of this demonstration are expected to expand the range of applications of avatar robots, including OriHime-D, and to further promote the employment and activities of people who are unable to go out due to disability or illness.

1. Background

Aiming to realize the IOWN concept, NTT supports the Avatar Robot Cafe DAWN ver.β permanent experimental shop, opened by OryLab Inc. on June 21, 2021, and is conducting joint demonstration experiments there.*3 Its customers are served by avatar robots operated by people with intractable diseases or severe disabilities who have difficulty going out.

At the Avatar Robot Cafe DAWN ver.β, a remote operator (pilot) works as service staff to provide drinks and other items via OriHime-D, a remote avatar robot. This service embodies a new way of working for people who are unable to go out due to disability or illness. In order to expand these activities and realize a future in which everyone can participate in society, it is important to use technology to overcome the problems that prevent people from participating in society and to expand the opportunities and environments in which avatar robots such as OriHime-D can play an active and positive role.

2. Outline of Initiatives

2.1 Communication control technology for realizing natural remote control

Human-operated avatar robots such as OriHime-D are expected to perform a wide range of activities flexibly and freely depending on the location or situation, from communication such as conversation and customer service to precise movements such as moving while avoiding people and obstacles. However, with conventional avatar robots, there is a large video delay between the moment the camera mounted on the avatar robot captures its surroundings and the moment that video is displayed on the monitor the pilot is watching. This delay makes it difficult to perform detailed movements such as moving the robot while avoiding obstacles, and the pilot feels stressed because he or she cannot operate the robot properly.

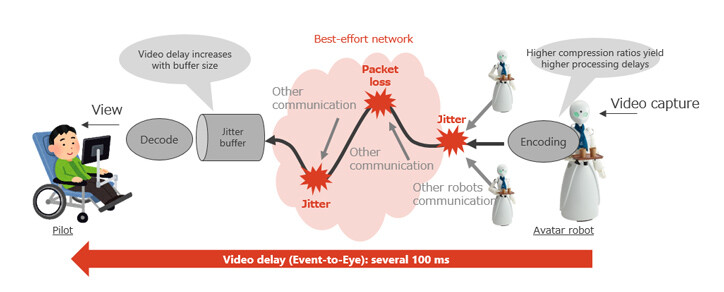

One of the reasons for the large video delay is the mechanism used to absorb quality fluctuations in the network. Because most existing networks are of the best-effort type, the video streams generated by avatar robots are multiplexed with other traffic on the way to remote locations, and congestion can occur in the network. In this configuration, packet loss and jitter may occur on the communication path, so the robot and the operation application include mechanisms to absorb the effects of congestion, such as encoding/decoding processing and a jitter buffer. These add-on mechanisms are the main cause of the processing delay; even when the communication quality is good, the video delay may be several hundred milliseconds (Figure 1).

Figure 1: Causes of Increased Video Delay
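To illustrate why a jitter buffer adds delay even on a good day, here is a minimal sketch of a fixed playout buffer. It assumes the simplest possible design, in which every frame is held until the slowest frame would have arrived; the function names and the delay values are hypothetical and are not taken from the OriHime-D system.

```python
def required_buffer_ms(network_delays_ms):
    """Smallest fixed buffer that lets every frame arrive before its playout time.

    Assumption: a fixed (non-adaptive) jitter buffer sized to the spread
    between the fastest and slowest observed network delay.
    """
    return max(network_delays_ms) - min(network_delays_ms)


def playout_delay_ms(network_delays_ms):
    """Delay every frame experiences: fastest network delay plus the buffer."""
    return min(network_delays_ms) + required_buffer_ms(network_delays_ms)


# Hypothetical per-frame network delays in milliseconds.
best_effort = [30, 45, 120, 38, 95]   # large jitter
controlled = [5, 5, 6, 5, 6]          # small jitter

print(required_buffer_ms(best_effort), playout_delay_ms(best_effort))
print(required_buffer_ms(controlled), playout_delay_ms(controlled))
```

In this simplified model, every frame is delayed by the worst-case network delay, so a path with large jitter forces a large constant delay even for frames that arrived quickly.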

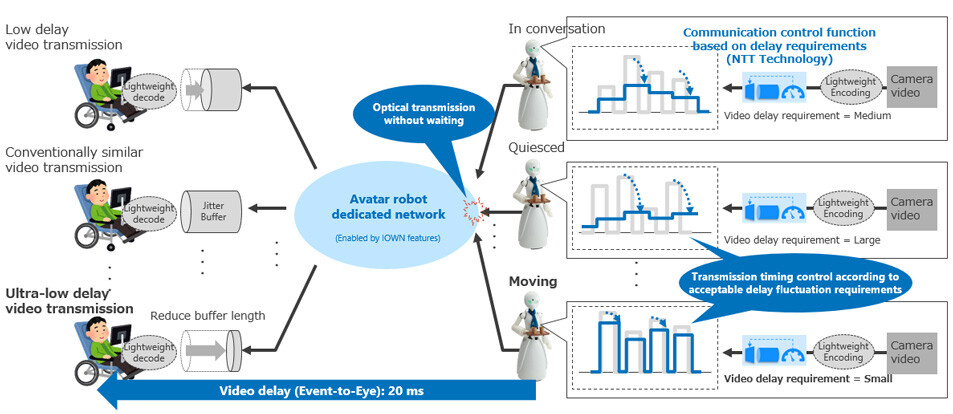

To solve this problem, we have developed a network-based control technology that enables optical transmission of multiple video streams without waiting by dynamically controlling the transmission timing according to the status and characteristics of each avatar robot. (Figure 2)

In the IOWN era, we are targeting end-to-end low-latency transmission over a dedicated network based on optical technologies. However, we also need to use finite network resources such as wavelengths efficiently, rather than setting up a dedicated network for each robot. For example, if there are multiple avatar robots at the same location, sharing the network is far more efficient. However, since waiting occurs when video streams are multiplexed, it is important to control communication to reduce its effect.

Therefore, transmission timing is controlled so as to reduce the maximum waiting time of a video stream of an avatar robot that is being operated, while the waiting time requirement of avatar robots that are not being operated is relaxed. This technology makes it possible to provide the optimum communication quality for each video stream, making it possible to reduce the weight of encoding/decoding processing and to shrink the jitter buffer, which are the main causes of video delay, in avatar robots that require low-delay operations. In a system that applies this technology, the video delay (event-to-eye) between the video capture by the camera mounted on the avatar robot and its display on the monitor viewed by the pilot is reduced to approximately 20 milliseconds.

Figure 2: Configuration example of communication control technology for realizing natural remote control
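The effect of prioritizing operated robots on a shared link can be sketched with a small simulation. This is only an illustration under stated assumptions, not NTT's actual control algorithm: it models the shared link as a server that sends one frame at a time, and it replaces dynamic timing control with a simple rule that sends frames from operated robots first. The `Frame` type, the robot names, and all timing values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    robot: str         # hypothetical robot identifier
    arrival_ms: float  # time at which the frame is ready to send
    tx_ms: float       # time the frame occupies the shared link
    operated: bool     # True if a pilot is currently operating this robot


def max_wait_ms(frames, prioritize_operated):
    """Return the worst waiting time per robot on a shared, non-preemptive link."""
    pending = sorted(frames, key=lambda f: f.arrival_ms)
    queue, worst = [], {}
    now, i = 0.0, 0
    while i < len(pending) or queue:
        # Admit every frame that has arrived by the current time.
        while i < len(pending) and pending[i].arrival_ms <= now:
            queue.append(pending[i])
            i += 1
        if not queue:
            # Link is idle: jump ahead to the next arrival.
            now = pending[i].arrival_ms
            continue
        if prioritize_operated:
            # Operated robots first, then earliest arrival.
            nxt = min(queue, key=lambda f: (not f.operated, f.arrival_ms))
        else:
            # Plain first-come, first-served multiplexing.
            nxt = min(queue, key=lambda f: f.arrival_ms)
        queue.remove(nxt)
        wait = now - nxt.arrival_ms
        worst[nxt.robot] = max(worst.get(nxt.robot, 0.0), wait)
        now += nxt.tx_ms
    return worst


# Three robots emit a frame at the same instant; only robot C is being operated.
frames = [
    Frame("A", 0.0, 2.0, False),
    Frame("B", 0.0, 2.0, False),
    Frame("C", 0.0, 2.0, True),
]
print(max_wait_ms(frames, prioritize_operated=False))
print(max_wait_ms(frames, prioritize_operated=True))
```

With first-come, first-served multiplexing, the operated robot C waits behind both idle robots; with prioritization, C is sent immediately and the idle robots absorb the wait, which mirrors the relaxed waiting-time requirement for robots that are not being operated.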

2.2 Demonstration experiment using OriHime-D in Avatar Robot Cafe DAWN ver.β

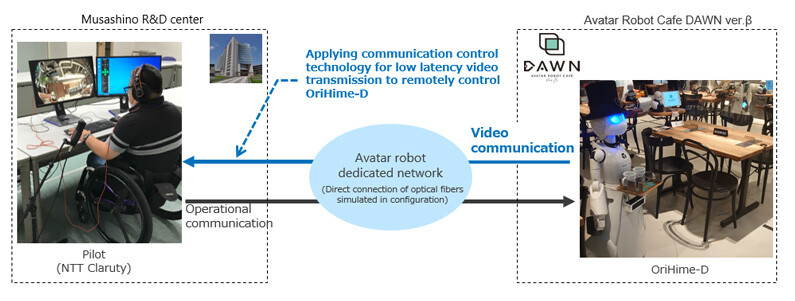

To confirm the effects of the proposed technology in actual use, we built a network for demonstration experiments between the Musashino R&D Center and the Avatar Robot Cafe DAWN ver.β. With the cooperation of NTT Claruty, we conducted experiments in which people with disabilities operated the avatar robot OriHime-D and performed service work at the cafe (Figure 3). In this environment, we compared the video delay when the OriHime-D video was transmitted over the Internet with the delay when it was transmitted over the demonstration network. We confirmed that the video delay was reduced by approximately 400 ms (to approximately 1/20 of the original delay).

Figure 3: Overview of the demonstration experiment
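The two delay figures in this release can be cross-checked with simple arithmetic. The sketch below assumes that the delay measured on the demonstration network equals the approximately 20 ms figure given in section 2.1; the release does not state the Internet-based delay directly, so the value computed here is an inference, and the function names are hypothetical.

```python
def internet_delay_ms(reduced_delay_ms, reduction_ms):
    """Delay before the reduction, inferred from the delay after it."""
    return reduced_delay_ms + reduction_ms


def delay_ratio(reduced_delay_ms, reduction_ms):
    """Reduced delay as a fraction of the inferred original delay."""
    return reduced_delay_ms / internet_delay_ms(reduced_delay_ms, reduction_ms)


reduced = 20.0     # approximate video delay with the technology (section 2.1)
reduction = 400.0  # approximate reduction reported in the experiment

print(internet_delay_ms(reduced, reduction))  # 420.0
print(delay_ratio(reduced, reduction))        # about 0.048, roughly 1/21
```

Under that assumption, the Internet-based delay would be about 420 ms, and the reduced delay is about 1/21 of it, which is consistent with the rounded "approximately 1/20" in the text.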

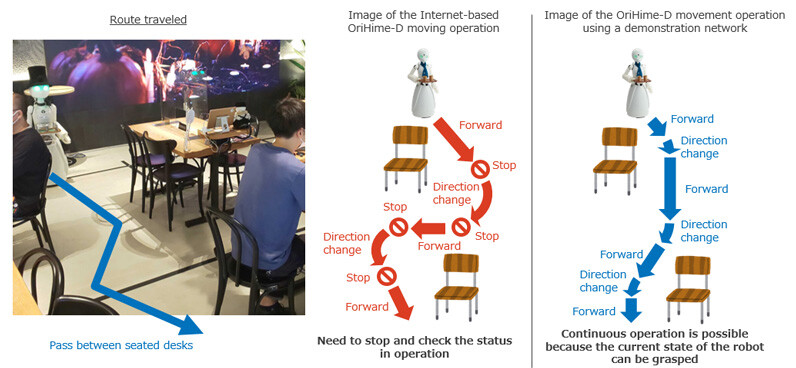

First, we measured the effects of reducing the video delay on avatar robot operability (Figure 4). For this measurement, we set up a scene in which the avatar robot had to move along a specified route between tables with seated people. With the OriHime-D configured to simulate the video delay of the Internet (Internet-based OriHime-D), the large video delay made it difficult to operate the robot smoothly because the operator had to issue movement commands based on lagging video images. It was often necessary to stop the robot and check its actual status. In addition, it was difficult to rotate the robot smoothly to a new direction, so robot movements took too long. In contrast, the OriHime-D that used the demonstration experiment network (demonstration experiment OriHime-D) allowed the operator to control the robot in real time, so that it could move forward and change direction continuously and very smoothly. The results confirmed that the same route could be traversed in half the time.

Figure 4: Difference in Moving Operation Created by Large and Small Video Delay

Next, we interviewed the pilot about the difference in operating feel between the Internet-based OriHime-D and the demonstration experiment OriHime-D. The pilot reported that the Internet-based OriHime-D could not be stopped at the intended place and had to be operated repeatedly, which made the pilot feel impatient and tired, whereas the demonstration experiment OriHime-D could be operated as expected. The pilot also commented that it was easy to use and that it took little time to get used to the operation itself.

Based on the above, we confirmed that the proposed technology can improve the operability of avatar robots by reducing the video delay, enabling smooth movement even in narrow passages such as between desks, and that it can reduce the stress that pilots experience when operating the robot. Based on these results, the system is expected to be applied not only to current cafe service duties but also to use cases in which people communicate with each other while moving along complex routes (e.g., guidance at museums and customer service at clothing stores).

3. Future developments

Through this experiment, we were able to confirm the new possibility of avatar robots playing an active role in various situations and environments. In the future, based on evaluations of the demonstration experiments, we will proceed with further evaluations of applicability and research and development to suit new use cases.

This technology will be introduced at the "NTT R&D FORUM — Road to IOWN 2021"*4, to be held from November 16 to 19, 2021.

*1, *2 About the Avatar Robot Cafe DAWN ver.β and OriHime-D

An experimental cafe operated by OryLab Inc., in which people with intractable diseases such as ALS or severe disabilities, who have difficulty going out, can work as service staff by remotely controlling the avatar robots OriHime and OriHime-D. OriHime-D is an avatar robot with a total height of approximately 120 cm that can be operated remotely to perform tasks involving physical labor, such as serving customers and carrying goods. "Avatar Robot Cafe" and "OriHime" are registered trademarks of OryLab Inc.

*3 Joint demonstration experiment

Sponsorship of Avatar Robot Cafe DAWN ver.β

https://group.ntt/jp/newsrelease/2021/06/17/210617a.html

*4 NTT R&D FORUM — Road to IOWN 2021

URL: https://www.rd.ntt/e/forum/

Contact Information

Nippon Telegraph and Telephone Corporation

Information Network Laboratory Group

Planning Department, Public Relations Section

E-mail: inlg-pr-pb-ml@hco.ntt.co.jp

Information is current as of the date of issue of the individual press release.

Please be advised that information may be outdated after that point.