April 17, 2026

NTT, Inc.

NTT DOCOMO, Inc.

IOWN × VTuber Enable VR Meet & Greet with Virtual High-Five Experiences

High-quality, immersive VR events delivered in real time from existing studios to remote venues, reducing operational costs

News Highlights:

- NTT DOCOMO XR Studio (Photo 1)*1 and a remote venue were connected via IOWN APN*2, *3, enabling a high-quality, highly immersive VR Meet & Greet featuring VTubers in real time. A reduction in operational costs compared to conventional approaches was also confirmed.

- A VR haptic communication technology based on Self-Haptics*4, developed by NTT, was combined with commercially available VR headsets to demonstrate the sensation of high-fiving a virtual character.

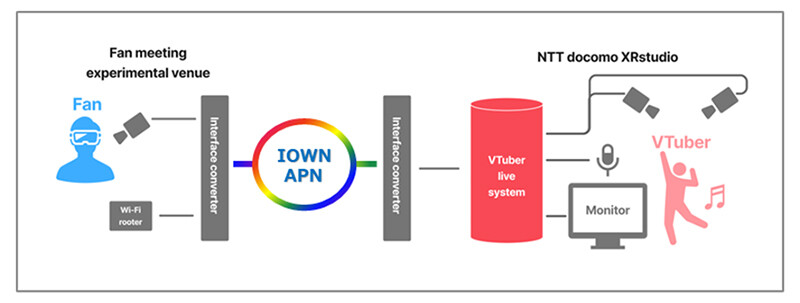

TOKYO — April 17, 2026 — NTT, Inc. (Headquarters: Chiyoda-ku, Tokyo; President and CEO: Akira Shimada; hereinafter "NTT") and NTT DOCOMO, Inc. (Headquarters: Chiyoda-ku, Tokyo; President and CEO: Yoshiaki Maeda; hereinafter "NTT DOCOMO") successfully conducted a joint experiment (Figure 1) on March 15, 2026, as a special event of the fan-participatory live experience "ConnectVFes," where multiple VTubers perform and interact with fans. The experiment demonstrated a high-quality, highly immersive VR Meet & Greet utilizing IOWN APN.

NTT DOCOMO's XR Studio in Odaiba, Minato-ku, Tokyo, was connected to a remote experimental venue in Musashino, Tokyo, via IOWN APN (All-Photonics Connect*5), which provides high-capacity, low-latency, and stable transmission. This made possible a real-time VR Meet & Greet with two-way interaction between VTubers and fans.

In addition, by combining NTT's Self-Haptics-based VR haptic communication technology with commercially available VR headsets, it was demonstrated that fans and VTubers can communicate in real time in a virtual space while experiencing the sensation of a physical high-five.

Going forward, efforts will continue to create VTuber events and new entertainment services leveraging IOWN. Collaboration with regional studios and venues will also contribute to expanding business opportunities for a wide range of partner companies.

Photo 1. NTT DOCOMO XR Studio

Figure 1. VTubers who participated in the joint experiment

Background

The entertainment business, including anime and gaming, continues to expand globally and remains a field in which Japan holds strong competitiveness. In particular, the VTuber business, which reflects performers' individuality in virtual characters through motion capture technology*6, has seen significant growth. Demand for realistic live experiences and interactive engagement with performers is increasing both domestically and internationally.

On the other hand, event operators face several challenges. On-site events require the installation of temporary studios within venues to enable low-latency transmission of video and audio over short distances, resulting in high operational costs. Streaming over the internet often suffers from unstable network quality, making it difficult to deliver high-quality video such as Full HD or 4K and natural conversations. Pre-recorded live content lacks a sense of presence and fails to generate sufficient excitement.

Demonstration Details and Results

NTT DOCOMO's XR Studio in Odaiba, Minato-ku, Tokyo, and the experimental venue in Musashino, Tokyo, were connected via IOWN APN, which provides high capacity, low latency, and stable transmission (Figure 2). By leveraging NTT's Self-Haptics-based VR haptic communication technology, it was demonstrated that a high-quality, highly immersive Meet & Greet can be delivered in real time.

Figure 2. Configuration image of a VR Meet & Greet utilizing IOWN APN

(1) Real-time, high-quality VR Meet & Greet enabled by low-latency communication, with an approximately 20% reduction in operational costs

IOWN APN delivered high communication performance, with one-way transmission latency averaging 0.04 milliseconds and ultra-low jitter (latency variation) of just 0.05 microseconds. This enabled the delivery of a real-time, high-quality VR Meet & Greet over a network connection, with an experience comparable to that of content provided from a temporary studio installed within the event venue. Participants wearing VR headsets were able to experience natural, real-time communication with virtual characters appearing directly in front of them.

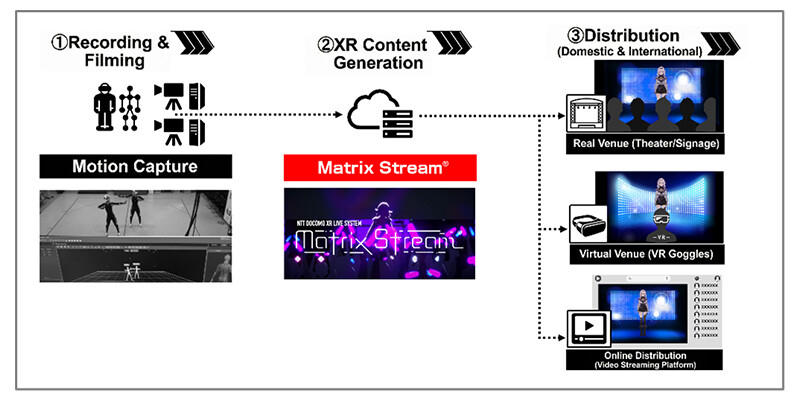

In addition, for the connection between studio equipment, including the VTuber live system "Matrix Stream" (Figure 3)*7, and venue equipment such as VR headsets and cameras, an interface conversion device equipped with HDMI signal conversion technology*8 was utilized. This demonstrated that video and audio can be transmitted to remote locations without degradation in quality or latency fluctuations caused by communication, even when locations are geographically separated.
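
As a rough illustration of why uncompressed video transmission calls for a high-capacity link, the sketch below estimates the raw bitrate of Full HD and 4K video and picks the smallest All-Photonics Connect rate (from the rates listed under *5) that would carry it. The frame rate (60 fps), the color depth (24 bits per pixel), and the function names are assumptions made for illustration. Blanking intervals, audio, and framing overhead are ignored, and the figures do not describe the configuration actually used in the experiment.

```python
# Link rates listed for All-Photonics Connect in the glossary (*5), in Gbps.
APC_RATES_GBPS = (10, 100, 400, 800)


def uncompressed_gbps(width, height, fps=60, bits_per_pixel=24):
    """Raw video bitrate in Gbps, counting active pixels only (assumed 60 fps, 24 bpp)."""
    return width * height * fps * bits_per_pixel / 1e9


def smallest_fitting_rate(required_gbps, rates=APC_RATES_GBPS):
    """Return the lowest listed link rate that can carry the stream, or None."""
    for rate in sorted(rates):
        if rate >= required_gbps:
            return rate
    return None


full_hd = uncompressed_gbps(1920, 1080)   # about 2.99 Gbps
uhd_4k = uncompressed_gbps(3840, 2160)    # about 11.94 Gbps

print(round(full_hd, 3), smallest_fitting_rate(full_hd))  # 2.986 10
print(round(uhd_4k, 3), smallest_fitting_rate(uhd_4k))    # 11.944 100
```

Under these assumptions, a single uncompressed Full HD stream fits within a 10 Gbps connection, while an uncompressed 4K stream at 60 fps would need the next rate up.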

Furthermore, compared to conventional on-site events that require a temporary studio to be installed within the venue, there was no need to assemble aluminum trusses for motion capture equipment such as lighting and cameras, or to relocate high-precision cameras and servers. As a result, operational costs were reduced by approximately 20%. It was also confirmed that, by utilizing existing studio facilities, content can be delivered while maintaining the same level of operational quality as conventional approaches.

Figure 3. VTuber live system "Matrix Stream"

(2) Demonstration of Self-Haptics-based VR haptic communication technology, enabling the sensation of high-fiving a virtual character

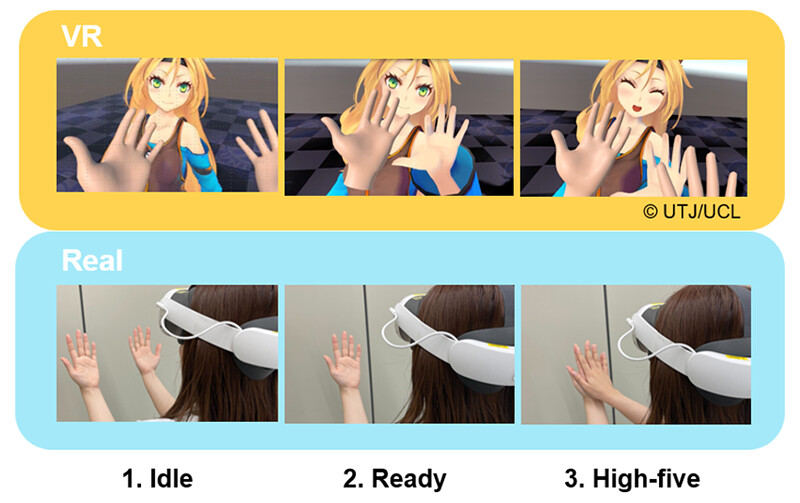

To address the growing demand for real-time, interactive engagement with virtual characters, NTT's Self-Haptics-based VR haptic communication technology was combined with commercially available VR headsets. This enabled a form of pseudo-contact communication in which users can experience the sensation of high-fiving a virtual character within a virtual environment (Figure 4).

Figure 4. High-five interaction with a virtual character

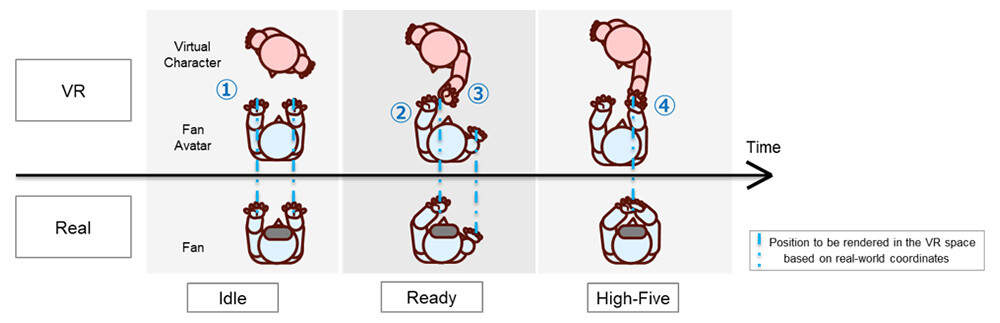

Self-Haptics technology substitutes tactile and force sensations using one's own body. To apply it to contact-based communication in virtual environments (Figure 5), a visual effect is used that shifts the rendered position of the user's hand away from its real-world position. This allows users to feel as if they are physically high-fiving a virtual character, even though they are actually touching their own hands.

- Virtual characters and user avatars are rendered in the virtual space based on real-world positional data

- During conversation in the virtual space, the user's avatar's left hand is gradually rendered with an outward offset

- The virtual character's left hand synchronizes with the user's left hand

- When the user brings both hands together in the real world, a high-five with the virtual character is achieved in the virtual space

Figure 5. Mechanism for experiencing the sensation of a physical high-five with a virtual character
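
The four rendering steps listed above can be sketched in code as follows. This is a minimal illustration under stated assumptions, not NTT's implementation. The coordinate convention (negative x is the user's left), the offset size, the ramp duration, the contact threshold, and all names are hypothetical. Placing the virtual character's hand at the user's real left-hand position is one reading of "synchronizes" in the description.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]  # (x, y, z) in meters; -x is the user's left (assumed)


@dataclass
class RenderedFrame:
    avatar_left: Vec3     # where the user's left hand is drawn in VR
    avatar_right: Vec3    # where the user's right hand is drawn in VR
    character_hand: Vec3  # where the virtual character's hand is drawn
    high_five: bool       # True when the high-five is shown in VR


def render_frame(real_left: Vec3, real_right: Vec3, elapsed_s: float,
                 max_offset_m: float = 0.30, ramp_s: float = 10.0,
                 contact_m: float = 0.03) -> RenderedFrame:
    """Compute rendered hand positions from tracked real-world positions.

    All default values are illustrative assumptions.
    """
    # Step 2: the avatar's left hand drifts outward gradually over ramp_s seconds.
    progress = min(max(elapsed_s / ramp_s, 0.0), 1.0)
    offset = max_offset_m * progress
    avatar_left = (real_left[0] - offset, real_left[1], real_left[2])

    # Step 3: the character's hand follows the user's real left hand (assumed reading).
    character_hand = real_left

    # Step 4: when the real hands meet, the avatar's right hand touches the
    # character's hand in VR while the user feels their own left hand.
    high_five = math.dist(real_left, real_right) <= contact_m

    return RenderedFrame(avatar_left, real_right, character_hand, high_five)


# Hands apart at the start of the conversation: no offset yet, no contact.
start = render_frame((-0.2, 1.0, 0.3), (0.2, 1.0, 0.3), elapsed_s=0.0)
# Hands brought together after the ramp has finished: contact is detected.
later = render_frame((0.0, 1.0, 0.3), (0.01, 1.0, 0.3), elapsed_s=20.0)
print(start.high_five, later.high_five)  # False True
```

In this sketch the user never sees their own left hand at the point of contact, because it is drawn 0.30 m further out. What they see is the character's hand meeting their right hand, while the touch they feel comes from their own left hand.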

(3) Preliminary Results

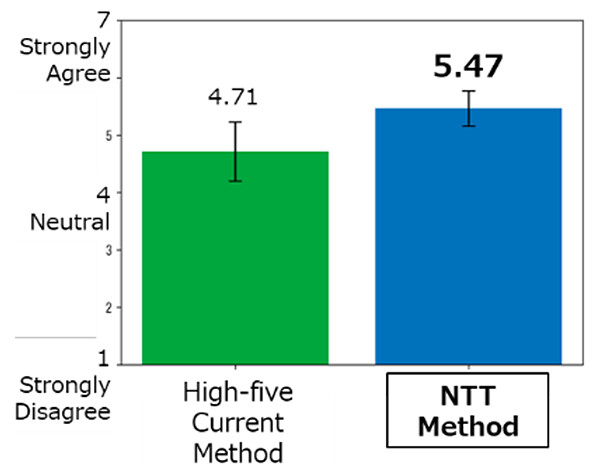

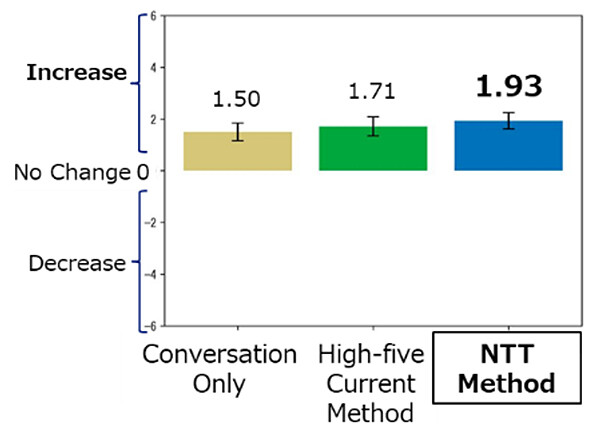

According to a survey conducted with 39 participants in the VR Meet & Greet experiment, NTT's technology received high evaluations in areas such as the sensation of high-fiving and the sense of unity with virtual characters (Figures 6 to 8).

In addition, all participants reported satisfaction levels of at least "somewhat satisfied" with the Meet & Greet experience, and expressed a willingness to participate again. These results confirm the value and effectiveness of VR Meet & Greet as a new form of entertainment experience.

Figure 6. VR Meet & Greet experiment scene

Figure 7. Perceived sensation of high-fiving

Figure 8. Sense of closeness to the virtual character (before vs. after)

Roles and Responsibilities

- NTT: Development of VR haptic communication technology based on Self-Haptics, with patent applications filed; technical support for HDMI conversion technology; and exploration of VTuber events leveraging IOWN technologies

- NTT DOCOMO: Provision of VTuber live performances and Meet & Greet; provision and operation of studio and venue equipment

Future Developments

XR business initiatives leveraging advanced technologies, including IOWN, will be rolled out at studios and event venues to provide services that enable real-time, interactive engagement between fans and virtual characters performed by VTubers.

In addition to high-quality video and audio transmission enabled by IOWN, the creation of experiences that simulate physical touch, such as the sensation of a high-five, will be pursued to realize new forms of entertainment that foster a sense of connection with communities and society.

At the same time, services will be expanded for partner companies that hold diverse content, including VTubers, anime, and games. Collaboration with studios and venues on real-time interactive events will contribute to the expansion of global business opportunities.

[Glossary]

*1 NTT DOCOMO XR Studio (Odaiba, Minato-ku, Tokyo)

By combining high-precision VICON cameras with the VTuber live system "Matrix Stream," the studio enables simultaneous tracking of up to six performers. This supports the production of a wide range of content, including singing, dancing, and variety programs. In addition, real-time direction synchronized with lighting and audio systems is supported, enabling highly immersive events that integrate physical and virtual elements, such as live performances, talk events, and Meet & Greet.

*2 IOWN (Innovative Optical and Wireless Network)

The IOWN concept is a network and information processing infrastructure, including terminals, designed to optimize both individuals and the overall system based on all types of information. It leverages photonics-centered innovative technologies to deliver high-speed, high-capacity communications and vast computational resources.

IOWN concept: https://group.ntt/en/group/iown/

*3 IOWN APN (All-Photonics Network)

The All-Photonics Network (APN) is one of the major technological fields that comprise IOWN. It introduces photonics-based technologies into everything from devices to networks and aims to realize extremely low power consumption, high speed, high capacity, and low latency transmission through wavelength networks that provide end-to-end optical wavelength paths.

IOWN APN: https://group.ntt/en/group/iown/function/apn.html

*4 Self-Haptics

A technology that generates or substitutes tactile and force sensations by utilizing the user's own body movements, skin, and musculoskeletal system. A key characteristic is the concept of treating the human body itself as a "haptic device."

*5 All-Photonics Connect

All-Photonics Connect provides point-to-point connections between designated locations and enables high-speed, high-capacity, low-latency, and energy-efficient communications at 10 Gbps, 100 Gbps, 400 Gbps, and 800 Gbps.

All-Photonics Connect: https://business.ntt-east.co.jp/service/koutaiikiaccess/ (Japanese)

*6 Motion Capture Technology

Motion capture technology records human movements accurately using sensors or cameras and reproduces them as digital data. By capturing real-world movements directly into digital environments, it enables realistic and immersive expressions as well as highly precise motion analysis.

*7 VTuber Live System "Matrix Stream"

This system uses motion capture technology to acquire performers' movements in real time and generate corresponding movements for virtual characters in a virtual environment. It enables real-time delivery of XR-based live performances and Meet & Greet to physical venue screens, virtual venues in metaverse environments, and devices such as PCs and smartphones.

*8 HDMI Signal Conversion Technology

A technology that converts HDMI signals into long-distance transmission signals without compression while maintaining low latency.

HDMI signal conversion technology: https://group.ntt/en/newsrelease/2024/10/08/241008b.html

About NTT

NTT is a leading global technology innovator, providing a broad range of services to both consumers and businesses. As a mobile operator and provider of infrastructure, networks, and services, NTT is dedicated to promoting a sustainable future through cutting-edge innovations. Our portfolio includes business consulting, AI-powered solutions, application services, global networks, cybersecurity, data center and edge computing, all supported by our deep global industry expertise. Generating over $90 billion in revenue and employing 340,000 professionals, we allocate 30% of our annual profits to fundamental research and development. With operations spanning more than 70 countries and regions, our clients include over 75% of Fortune Global 100 companies, alongside thousands of enterprises, government organizations, and millions of consumers.

Media contacts

NTT, Inc.

NTT IOWN Integrated Innovation Center

Public Relations

Inquiry Form

NTT DOCOMO, Inc.

Company Corporate Department

Information is current as of the date of issue of the individual press release.

Please be advised that information may be outdated after that point.
